Earlier this year, Adrian Courrèges wrote an article detailing his findings while reverse engineering the rendering pipeline in Deus Ex: Human Revolution.

Starting from a given frame, he illustrates the successive stages of the rendering: creation of the G-buffer, shadow map, ambient occlusion, light pre-pass, how opaque and transparent objects are treated differently, volumetric lights, the bloom effect in LDR, anti-aliasing and color correction, the depth of field, and finally the visual feedback for object interaction.

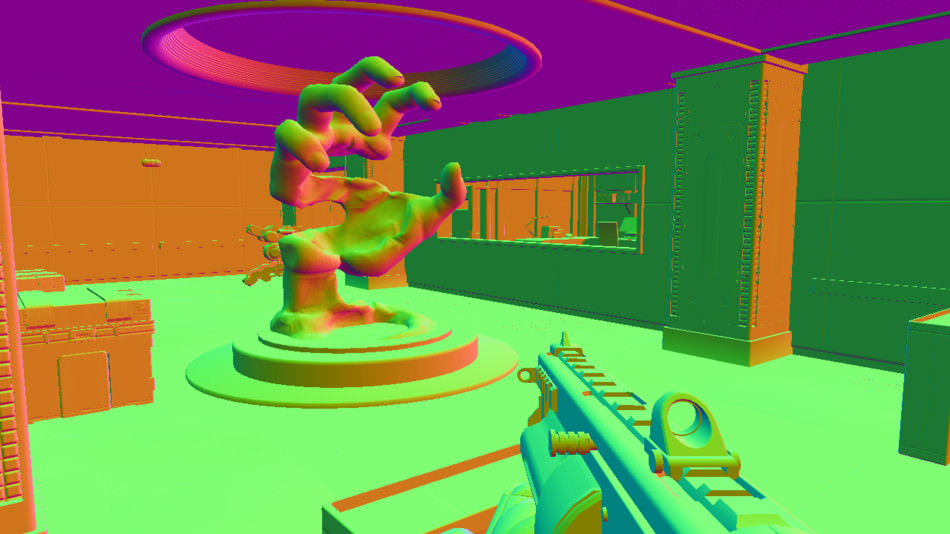

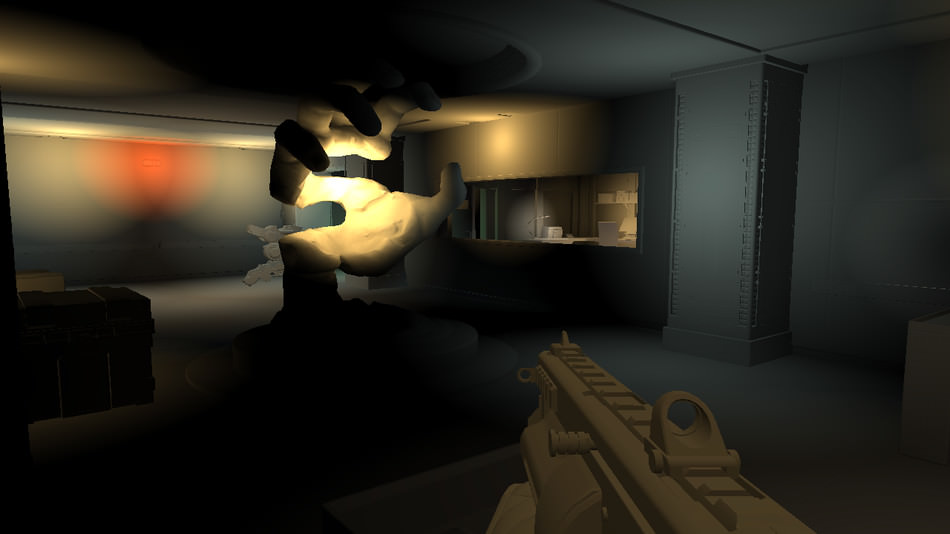

Here are a few screenshots stolen from his article:

Update:

Adrian has since posted a new article, this time breaking down the rendering of a frame in Supreme Commander. The comments also include insights from Jon Mavor, the programmer in charge of the rendering at the time.